API Support

Just set thecache_control param in your respective message body:

Prompt Templates Support

Set any message in your prompt template to be cached by just toggling theCache Control setting in the UI:

Cache TTL Options

By default, the cache has a 5-minute lifetime that refreshes each time cached content is used. You can optionally specify a 1-hour TTL by adding thettl field to cache_control:

| TTL | Write Cost | Best For |

|---|---|---|

5m (default) | 1.25x base input price | Prompts used more frequently than every 5 minutes |

1h | 2x base input price | Agentic workflows, long conversations where follow-ups may exceed 5 minutes |

- The message you are caching needs to cross minimum length to enable this feature (1024 tokens for Claude 3.5 Sonnet and Claude 3 Opus, 2048 tokens for Claude 3 Haiku)

- You can mix both TTLs in the same request, but 1-hour entries must appear before 5-minute entries

- Up to 4 cache breakpoints per request

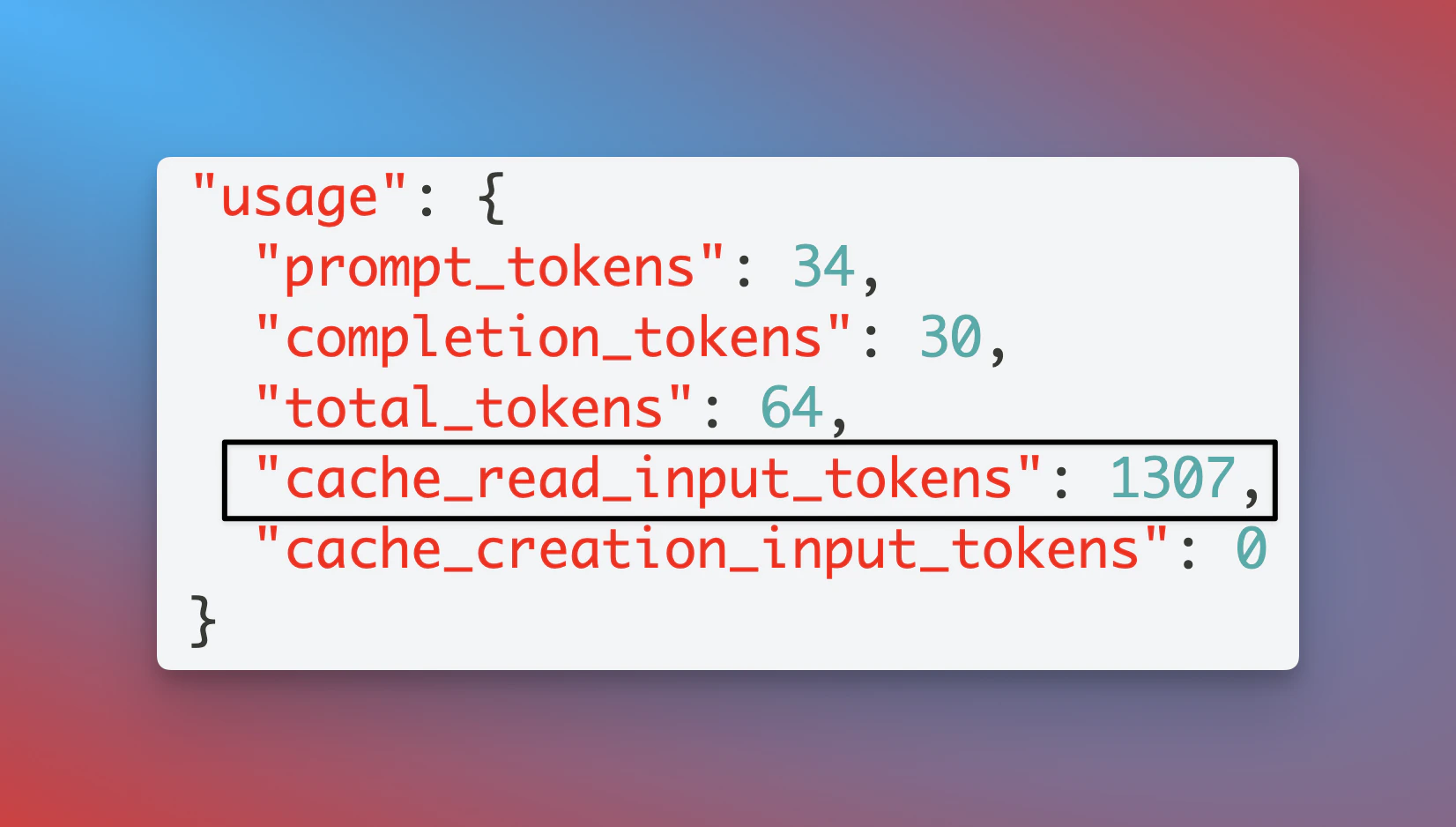

Seeing Cache Results in Portkey

Portkey automatically calculates the correct pricing for your prompt caching requests & responses based on Anthropic’s calculations here:

usage parameters:

cache_creation_input_tokens: Number of tokens written to the cache when creating a new entry.cache_read_input_tokens: Number of tokens retrieved from the cache for this request.

Understanding Token Counts with CachingPortkey normalizes Anthropic’s response to the OpenAI format. In this format, This differs from Anthropic’s native format where

prompt_tokens includes the cached tokens:inputTokens excludes cached tokens. Portkey’s pricing calculation accounts for this by:- Subtracting cached tokens from

prompt_tokensto get the base input token count - Applying the standard input token rate to base tokens

- Applying the discounted cache read rate to

cache_read_input_tokens - Applying the cache write rate to

cache_creation_input_tokens